Improving activation by cutting onboarding from 21 steps to one

Research and an A/B test that took under 30 minutes to implement revealed that Intruder's carefully built onboarding flow was actively harming conversion - and established experimentation as a new norm in the process.

Context

There was internal consensus that the free trial experience could work harder, but nobody had looked closely at the data. Following a review of the marketing site's conversion performance, I took it upon myself to investigate.

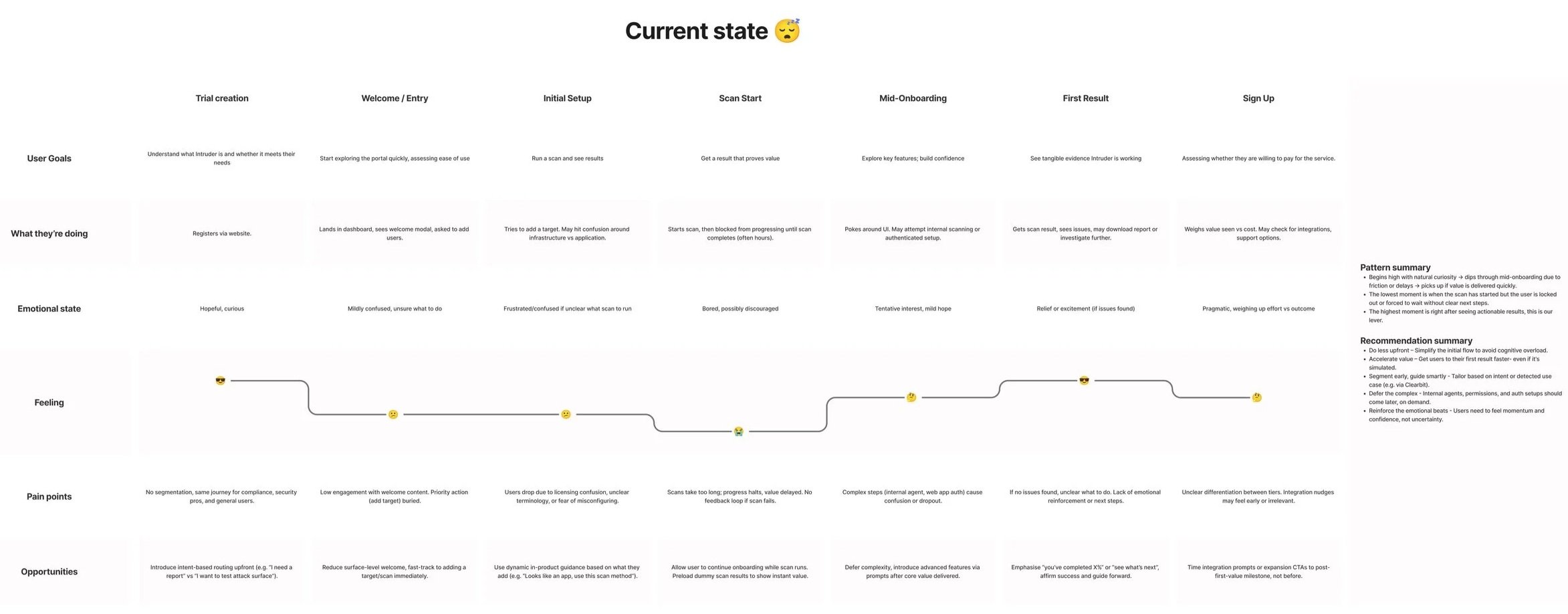

Intruder had a 21-step onboarding flow covering everything from adding colleagues to reviewing scan results. After investigating drop-off data, I found that fewer than 1% of trials were completing the whole thing. More than 50% weren't completing even the second step.
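As an illustration of how drop-off figures like these can be derived, here is a minimal sketch. It assumes each trial can be represented as the set of onboarding step numbers it completed; the function names and the sample data are hypothetical and are not Intruder's analytics. Steps are treated as a set rather than a strict sequence because a trial may complete them out of order.

```python
def step_completion_rates(trials, total_steps=21):
    """Share of trials that completed each step, keyed by step number.

    `trials` is a list of sets; each set holds the step numbers one trial completed.
    """
    n = len(trials)
    if n == 0:
        return {step: 0.0 for step in range(1, total_steps + 1)}
    return {
        step: sum(step in done for done in trials) / n
        for step in range(1, total_steps + 1)
    }


def full_completion_rate(trials, total_steps=21):
    """Share of trials that completed every step in the flow."""
    if not trials:
        return 0.0
    all_steps = set(range(1, total_steps + 1))
    return sum(all_steps <= done for done in trials) / len(trials)


# Hypothetical sample: five trials, only one of which finishes all 21 steps.
sample_trials = [{1}, {1, 2}, set(), {1, 3}, set(range(1, 22))]
rates = step_completion_rates(sample_trials)
print(f"Step 2 completion: {rates[2]:.0%}")
print(f"Full completion: {full_completion_rate(sample_trials):.0%}")
```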

The problem

The 21-step flow walked users through adding colleagues, adding targets, starting a scan, configuring integrations, reviewing results, fixing vulnerabilities, and running a confirmation scan. The steps had been chosen based on a combination of correlation with activation and logical sequencing - well-intentioned, but built around assumed behaviour rather than observed behaviour.

Session recordings revealed that many users were skipping around the flow or ignoring it entirely. Interviews with recently activated customers surfaced three consistent patterns:

- Preference to roam - users consistently explored the platform themselves, consciously avoiding onboarding

- Inbuilt inertia - onboarding actually slowed customers down in getting to their job-to-be-done (JTBD)

- JTBD undervalued - the flow focused on features rather than the task customers were hiring Intruder to achieve

Research and insight

A competitive review of seven similar products found an average of ~9 steps, with a large minority having no formal onboarding flow at all. Both the shorter flows and the no-flow products prioritised momentum over instruction.

The 'Aha moment' - when trial customers reliably understood the value of Intruder - was when a vulnerability scan completed and returned issues. The biggest unavoidable obstacle was scan duration: on average ~71 minutes. A long time to keep someone engaged with a flow that was already losing half of them by step two.

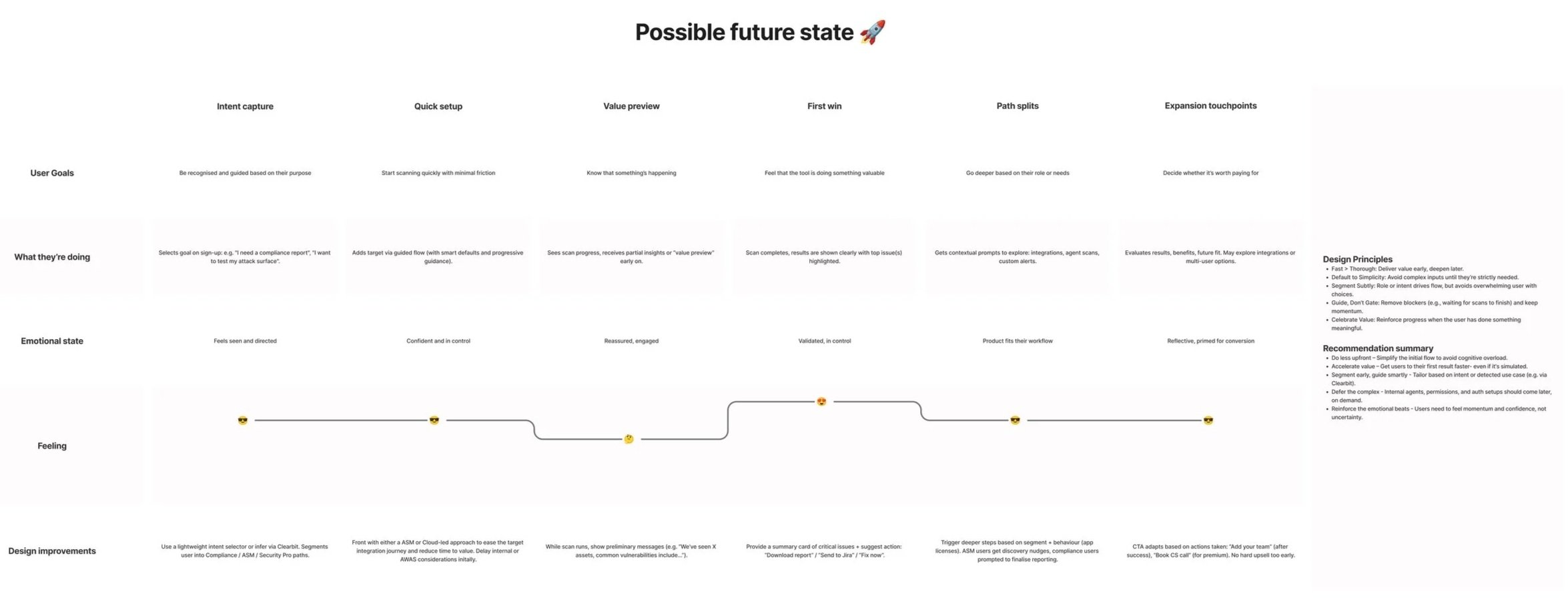

Strategic reframe

Internally, the preference was to condense the existing flow - seen as the safest option and aligned with what competitors were doing. I advocated for removing it entirely and testing that hypothesis before committing to a direction.

Intruder didn't have infrastructure for redirect tests, and the culture wasn't yet comfortable with experimentation at this scale. I spent a non-trivial period making the case - reframing the question not as "self-service vs. onboarding" but as: would removing the flow reduce conversion by more than 1%?
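Framed this way, the question is a non-inferiority test: is the variant worse than control by more than a tolerated margin? The sketch below is one way such a check could be written, not the analysis that was actually run. It assumes the 1% is an absolute margin of one percentage point, uses a normal approximation for the difference of two proportions, and all counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def non_inferiority_p_value(control_conv, control_n, variant_conv, variant_n, margin=0.01):
    """One-sided p-value for H0: variant rate is at least `margin` below control.

    A small p-value is evidence that removing the flow does NOT reduce
    conversion by more than the margin. Uses an unpooled normal approximation.
    """
    p_c = control_conv / control_n
    p_v = variant_conv / variant_n
    se = sqrt(p_c * (1 - p_c) / control_n + p_v * (1 - p_v) / variant_n)
    if se == 0:
        raise ValueError("standard error is zero; rates of exactly 0 or 1 need another method")
    z = (p_v - p_c + margin) / se
    return 1 - NormalDist().cdf(z)


# Hypothetical counts: 200 of 1,000 control trials convert vs 260 of 1,000 variant trials.
p = non_inferiority_p_value(200, 1000, 260, 1000)
print(f"p-value: {p:.4f}")
```

The appeal of this framing is that the variant does not have to win outright; it only has to show that it is not meaningfully worse, which is an easier case to make for a first experiment.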

The test

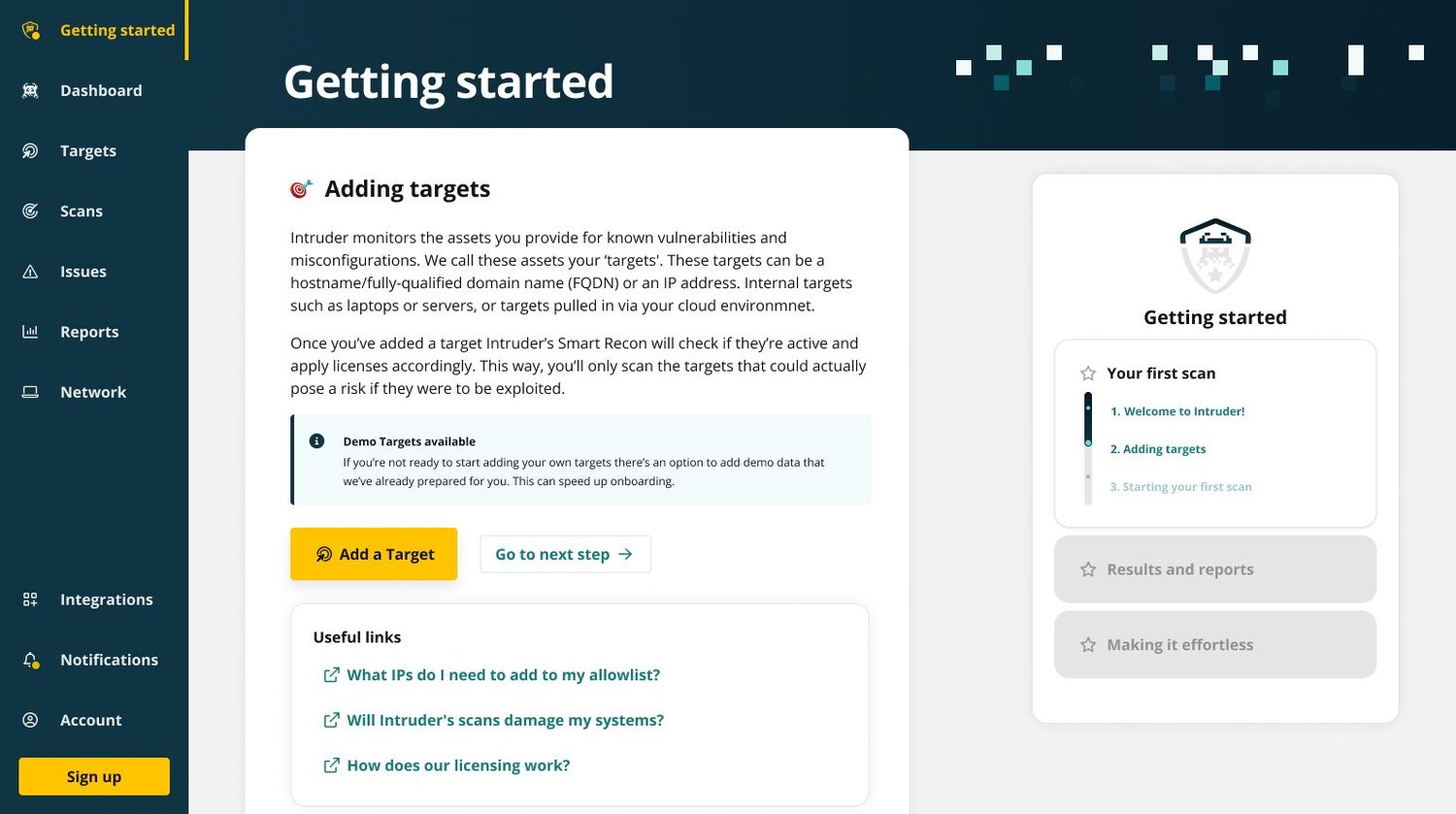

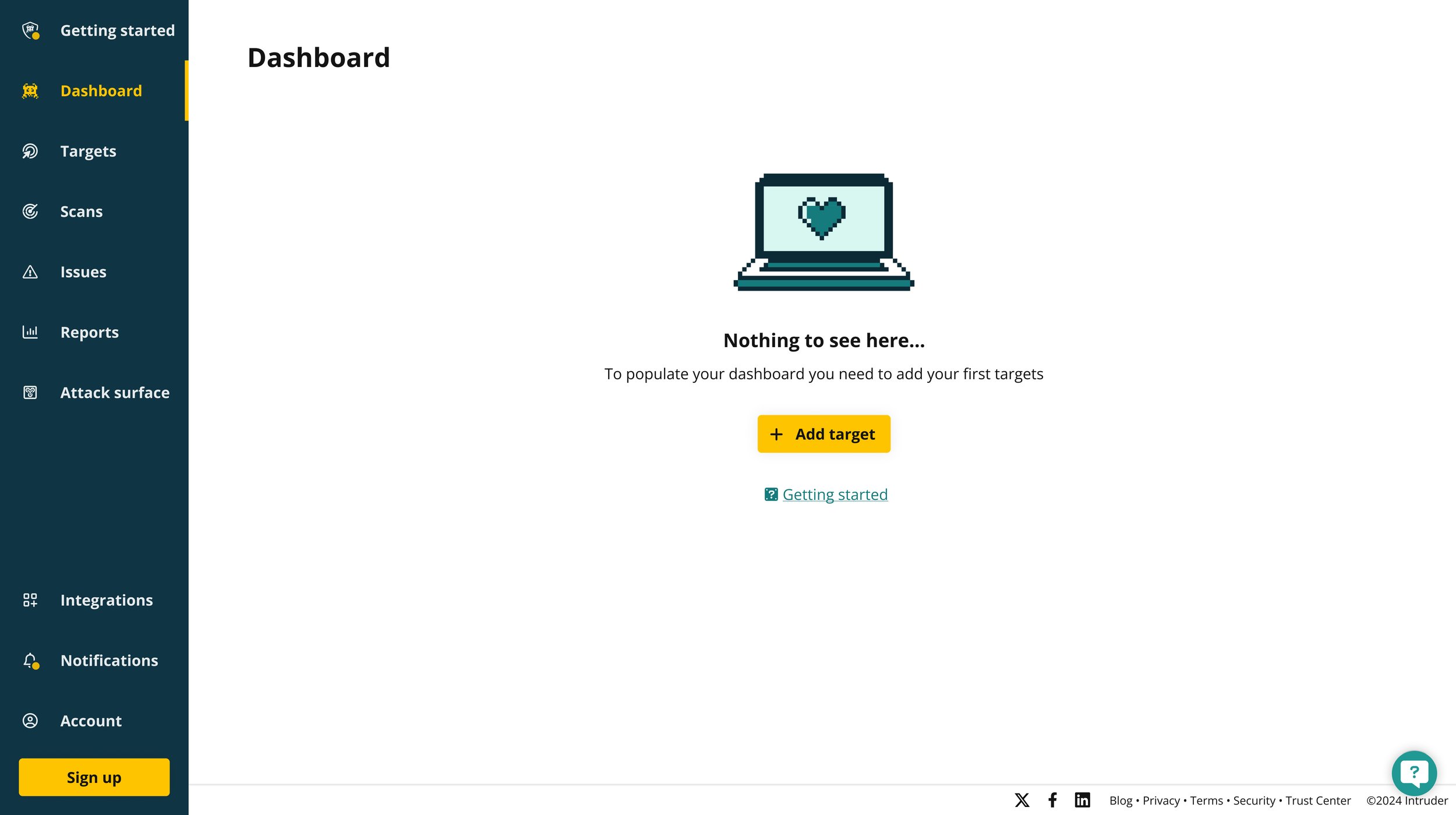

Once approved, the test took under 30 minutes to implement. For 50% of new trial customers, we redirected sign-in directly to the empty state of the dashboard - no onboarding flow, no changes to the empty state itself. The deliberately minimal intervention isolated the effect of removing onboarding entirely.
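A 50/50 split like this can be implemented in a few lines. The following is a minimal sketch assuming deterministic hash-based bucketing on a user ID; the experiment name, variant labels, and redirect paths are hypothetical, and the actual implementation may have worked differently.

```python
import hashlib


def assign_variant(user_id: str, experiment: str = "skip-onboarding") -> str:
    """Deterministically place a user in one of two equally sized buckets.

    Hashing the experiment name together with the user ID keeps the
    assignment stable across sign-ins and independent of other experiments.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    return "dashboard" if bucket < 50 else "onboarding"


def post_sign_in_path(user_id: str) -> str:
    """Choose where a new trial user lands after sign-in (paths are placeholders)."""
    return "/dashboard" if assign_variant(user_id) == "dashboard" else "/onboarding"


print(post_sign_in_path("trial-user-123"))
```

Because assignment depends only on the user ID and experiment name, no extra state has to be stored to keep a user in the same arm on later sign-ins.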

The test clearly validated that the existing flow was not suitable - and that reducing friction while preserving customer momentum drove meaningfully better outcomes.

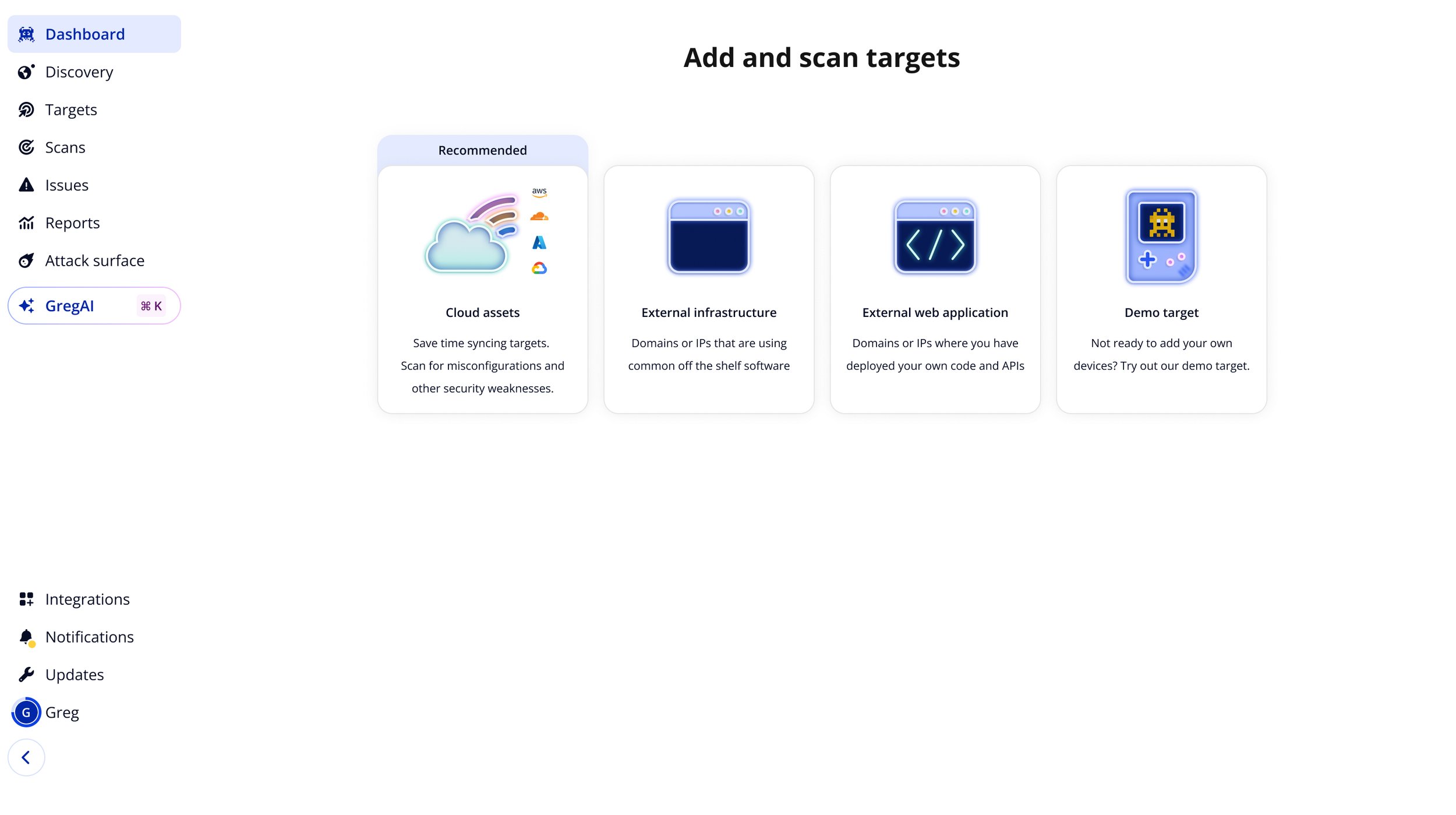

What came next

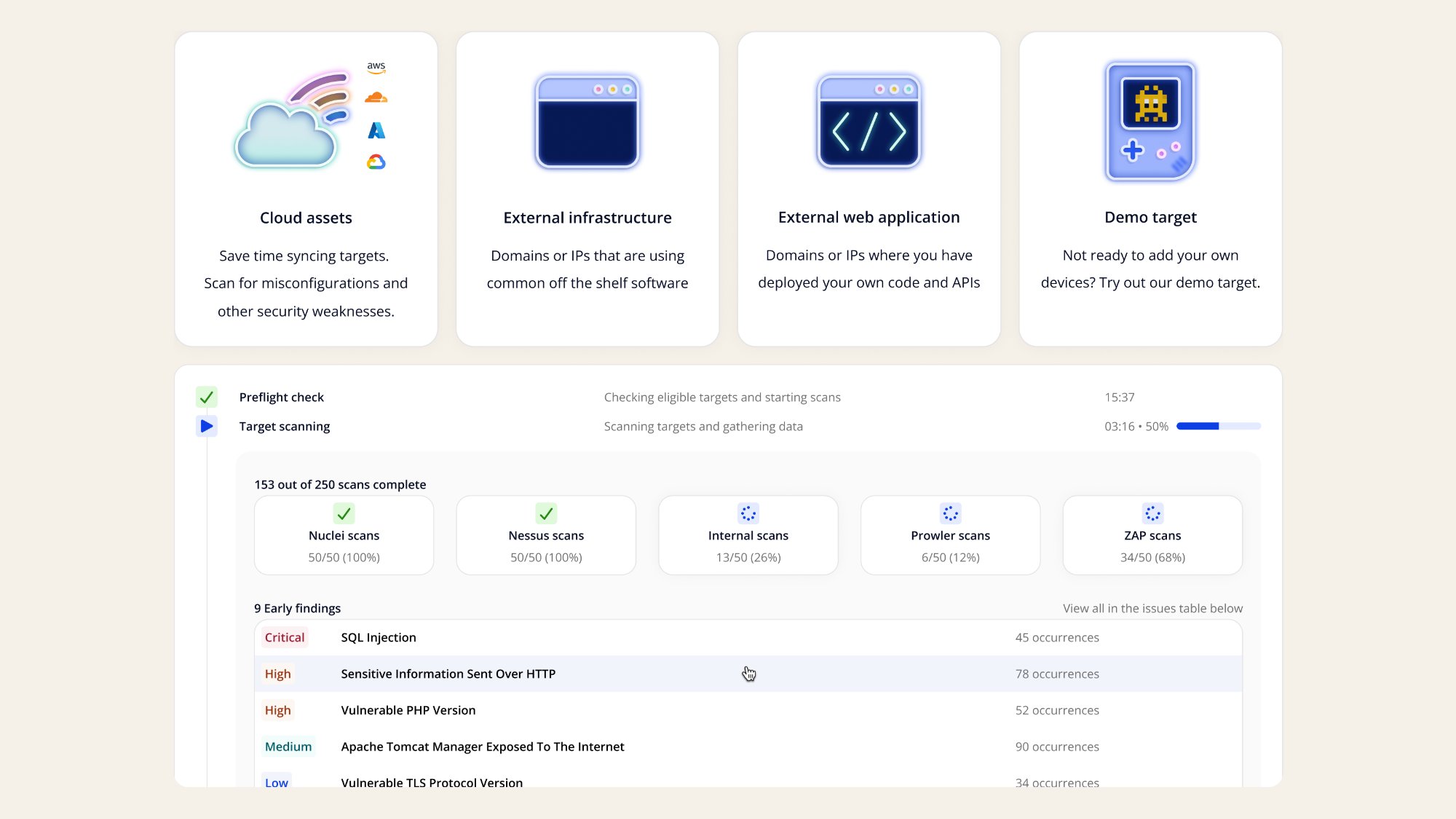

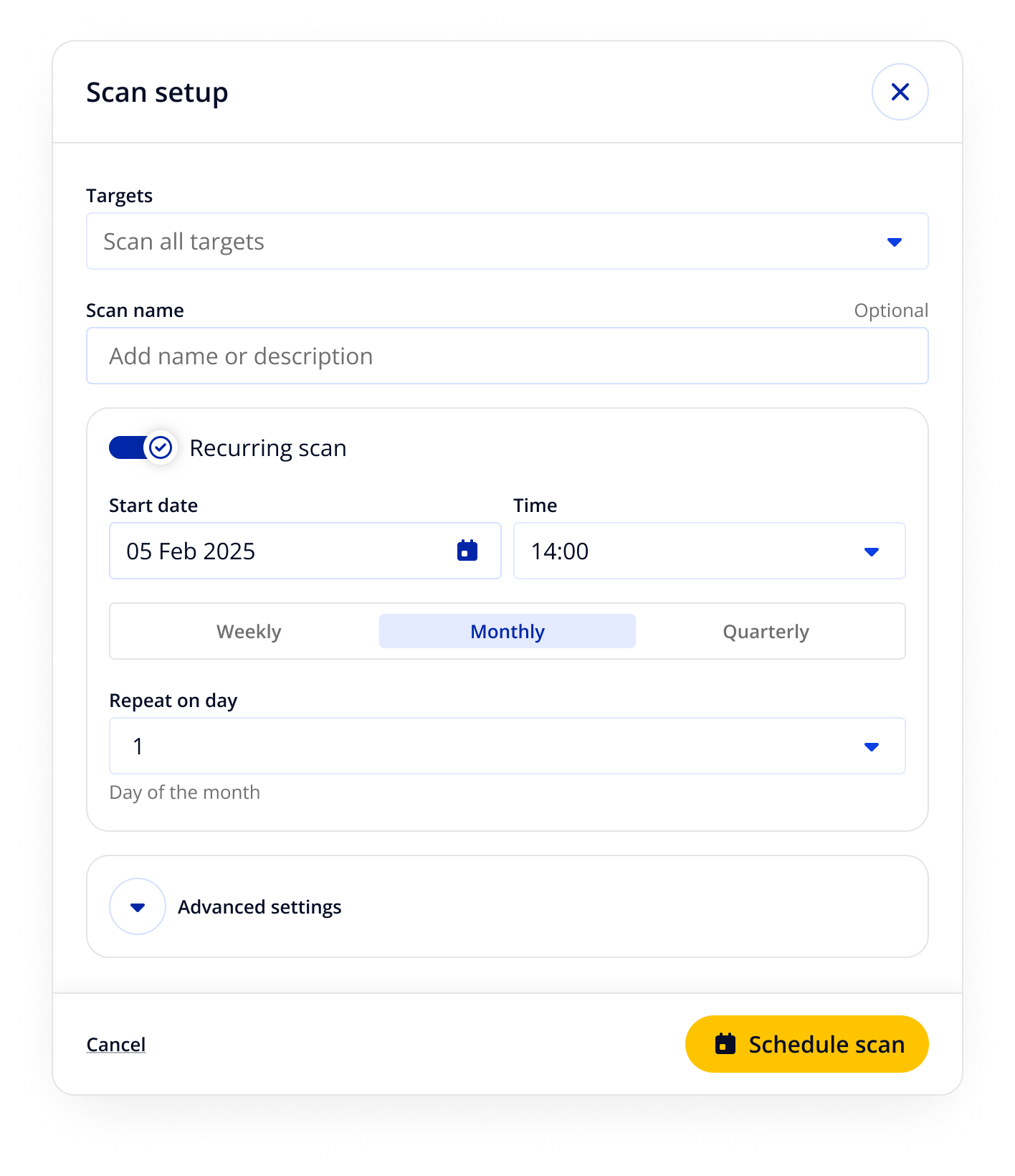

With a validated baseline, I mapped an idealised future state and scoped improvements in tiers by effort and impact. A new empty state gave users immediate, intent-based choices for how to start - cloud assets, external infrastructure, web application, or a demo target.

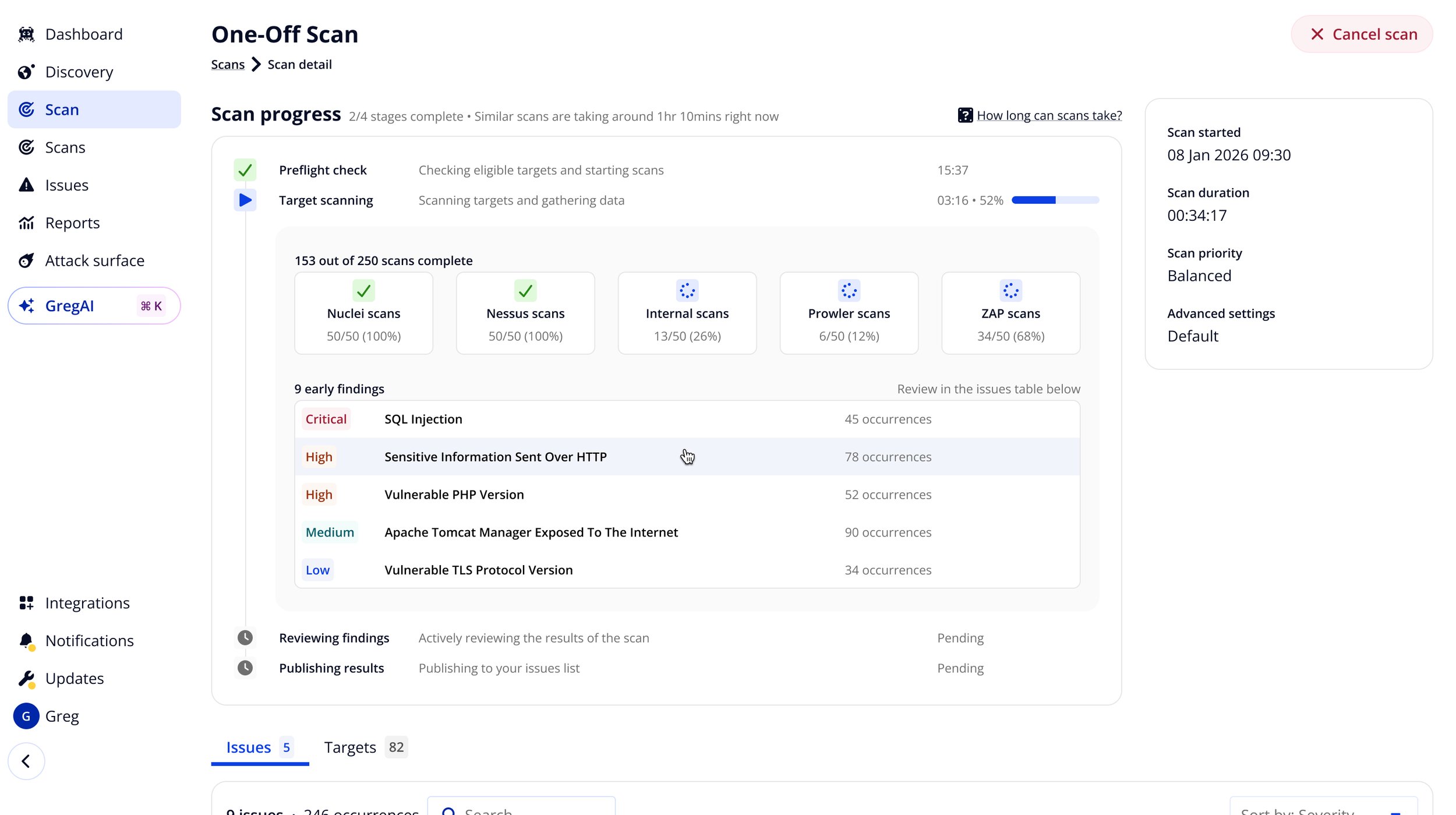

Early findings now surfaced during the scan itself, so users no longer had to wait the full ~71 minutes for a first result. A redesigned scan progress view showed live findings as they emerged, giving users something meaningful to engage with before the scan completed.

Outcomes

The test validated the direction. The redesigned empty state, early scan findings, and recurring scan defaults that followed drove further gains in the months after release.

The gains came mainly from customers adding more targets during the trial and then licensing them when they signed up. That growth followed from the emphasis on external targets, and specifically on targets sourced from cloud platforms, which we made more prominent as part of this work.

With a median customer ARR of roughly $3,100, even modest improvements in activation rate compounded quickly into meaningful revenue impact.
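To make the arithmetic concrete, here is a rough sketch of how an activation uplift could translate into annual recurring revenue. Only the ~$3,100 ARR figure comes from this project; the trial volume, uplift, and paid-conversion rate are placeholder inputs, and using a median as a per-customer value is itself a simplification.

```python
def incremental_arr(trials_per_year, activation_uplift, paid_rate_of_activated,
                    arr_per_customer=3100):
    """Estimate extra ARR from an absolute uplift in activation rate.

    activation_uplift: absolute increase in activation rate (0.02 = two points).
    paid_rate_of_activated: share of activated trials that become paying customers.
    """
    extra_activations = trials_per_year * activation_uplift
    extra_customers = extra_activations * paid_rate_of_activated
    return extra_customers * arr_per_customer


# Placeholder inputs for illustration only, not real figures.
estimate = incremental_arr(trials_per_year=1000, activation_uplift=0.02,
                           paid_rate_of_activated=0.5)
print(f"Estimated incremental ARR: ${estimate:,.0f}")
```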

Reflections

The hardest part of this project was the advocacy, not the design. I knew from previous experience with A/B testing and growth teams that the experiment was worth running - but I was asking an organisation without a testing culture to bet on removing something they'd invested significant effort in building.

Getting that approval set a precedent that mattered beyond this project. The willingness to question a previous investment on the basis of evidence - rather than defending it - became a norm we could build on.

The time it took to run a scan remained the biggest structural constraint in the trial experience. As a result of this project, scan duration became a metric that was monitored internally and actively managed. Issue previews and scan milestone updates let us work around that constraint, giving customers meaningful signals of value before the full scan completed, rather than asking them to wait.